More and more frequently, professional photographers and aspiring amateurs express fear that we live in a time of image overflow. The situation is often described in language borrowed from the apocalypse: floods, waves, seas and torrents of pictures, the sum of which threatens to wash us away. For professional image-makers, this state of affairs describes a reality in which their work has been irrevocably devalued.

What is to be done? Given the ever-quickening pace of image production, it’s hard to imagine how this current might reverse itself. But what can be changed is the way that we frame this present condition. Rather than mourn the loss of the “decisive moment,” we can celebrate the richness and variety of the image flow that we find ourselves immersed in. More people create and look at photographs than ever before, making the medium increasingly present in our lives. Take, as a parallel example, the rise of mass literacy. Its growth did not endanger writers—rather, it created a wider audience that could appreciate the power of the written word.

Going forward, images, in their cumulative force, will continue to shape our understanding of the world. In this respect, image professionals maintain an essential role in guiding our attention. Tomorrow’s photographer (and curator, and editor) will be venerated not only for making individual pictures, but also for how they give these pictures meaning, context, and force amid the infinite stream of imagistic data that surrounds us. What matters, then, is not the number of individual pictures, but our ability to interpret these visual impulses.

When we shift the conversation from production to interpretation, something important changes. Rather than worrying about how many x billion photographs were uploaded to y social media platform in the past z days, we can instead start to think about how our images are being put to use.

In this light, a very different concern emerges. As you read this sentence, a rapidly increasing number of images are being generated (and, more importantly, interpreted) without a human actor in the loop. The very existence of these images is a watershed development in the history of human vision. Up to this point, when we thought of an “image,” inherent in the idea was the presence of a human being to interpret it. But by coupling computer vision with artificial intelligence, we have removed human beings from this process. Consider a self-driving car: from a photographic point of view, it is an autonomous, self-propelled, image-making apparatus that produces millions of pictures and then acts upon those very same pictures to inform how it moves through the world.

The existence of this car is not the issue at hand, nor is the number of images that said car produces. The problem begins and ends with interpretation. We must ask ourselves: through which framework does the car view the world? What does its artificial intelligence see when it looks out at its surroundings, into a cascading tide of images? How is this autonomous machine understanding the pictures it is producing? How does artificial intelligence interpret what it sees and make meaning from it?

Self-driving cars are just one example among many where the interpretation of mass image production has real-world ramifications. In some cases, human-initiated photographs are taking on new, hidden meanings. For example, if you recently uploaded a picture of yourself or your loved ones to Facebook (or WhatsApp or Instagram), you did not simply share a bit of your life with your friends; you simultaneously gave up valuable visual, biometric data that can be analyzed in myriad ways, ultimately for political and commercial gain, as well as mass influence. Similarly, if you recently flew into the United States, you stood at the border and had your portrait taken by an unmanned camera, another image added to the database of population control and state power. But forget about the situations where we tacitly agree and participate. The deeper problem lies in the vast majority of pictures that are produced by machines, for machines. In exponential leaps, this quantity of images has grown so vast that we must depend on artificial intelligence to read them for us and produce its own interpretations.

When photographs are made by humans, they reveal glimpses of the past and the present—reality as it was, and is. We can look at these pictures and use them to collectively decide what can be done to shape our future. In short, photographs help us interpret and make sense of the world. Today, images are taking on an even greater power: as countless pictures are directed towards artificial intelligence, human interpretation gives way to algorithmic prediction. Machines are looking at the past and present to determine our future with little input from us.

Of the many tasks we are relinquishing to machines, interpretation must not be one. Yet steadily, without our full recognition, we are giving up our ability to make meaning of the world and, in doing so, we are losing an essential part of what makes us human.

Invisible Images and AI Interpretability

Trevor Paglen is an artist and activist who is particularly concerned with the power of images, having spent his entire career challenging our vision of the world. From questioning our assumptions about government surveillance to calling out the existence of covert operations or the “blank spots on the map,” Paglen takes nothing for granted. Through photography, sculpture, installation, investigative journalism, computer programming and other approaches, the overarching goal of his output is to show us things we are not meant to see, confronting the pervasive concealment of the realities that surround us.

The latest “blank spot” that has drawn his attention lies at the heart of contemporary artificial intelligence. When it comes to computer-aided image interpretation, we currently live in a world dominated by what Paglen calls “invisible images”—pictures produced by machines, for machines, with no humans in the loop. Invisible, then, from the human point of view.

Now, you might argue that the human role is present in the design of the machine, the construction of the algorithm, or the parameters of the programming. But the specifics of each AI interpretation are proprietary and black-boxed, meaning that while human decision-makers exist, they cannot be held to account.

Even more worrying is our burgeoning awareness of the “interpretability problem,” the “dark secret at the heart of AI.” That is, we don’t know why AI is doing what it’s doing or how it’s reaching its decisions. From restaurant recommendations to medical diagnoses and military surveillance decisions, the latest AI systems have been taught to teach themselves, but not to explain how they reach their conclusions.

While the world’s top computer scientists are wrestling with this issue, we, as engaged producers and consumers of photographs, also have a role to play. Besides making photographs, we must thoughtfully engage with the process of interpretation, consciously wielding it to make sense of the world.

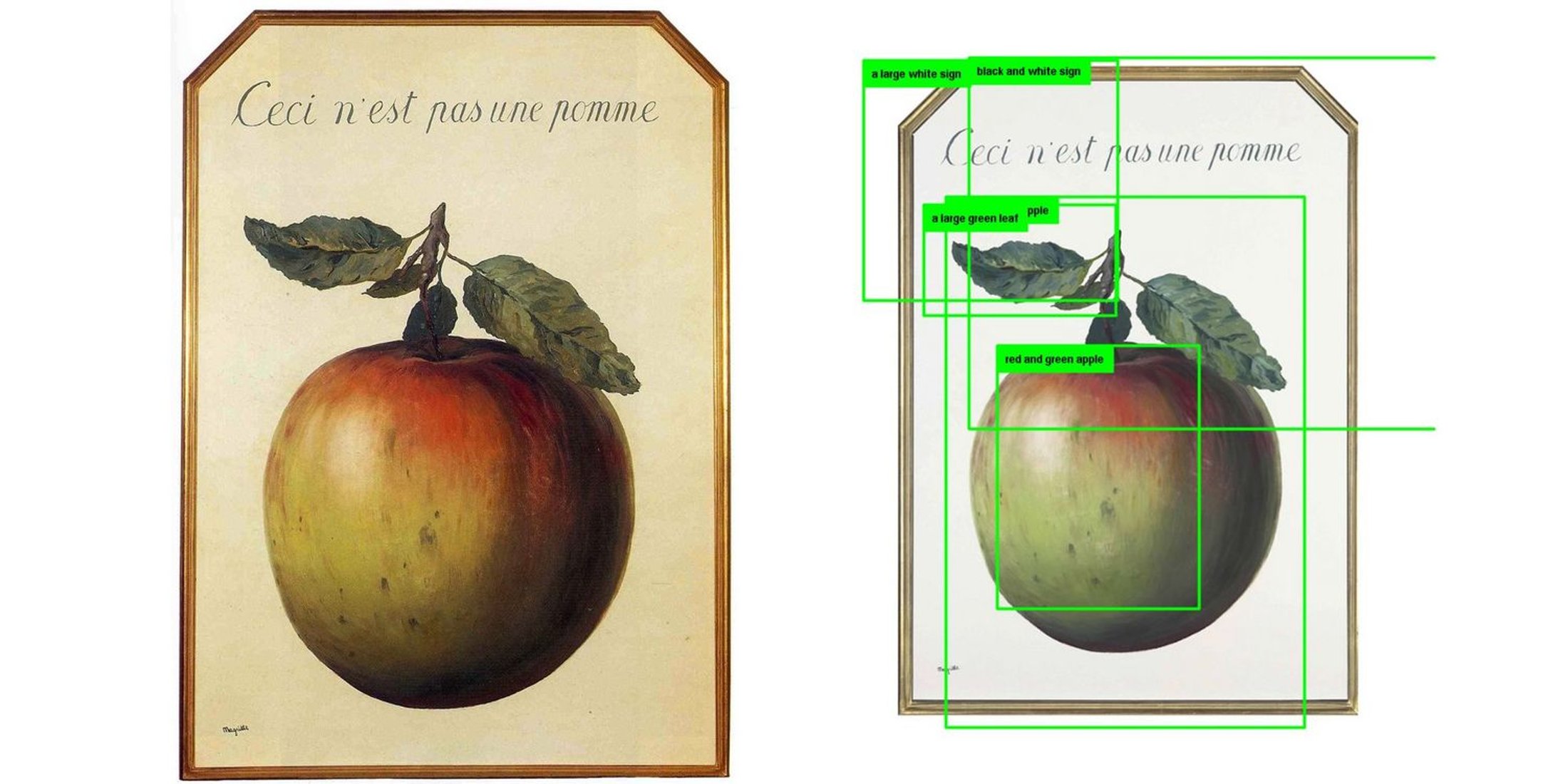

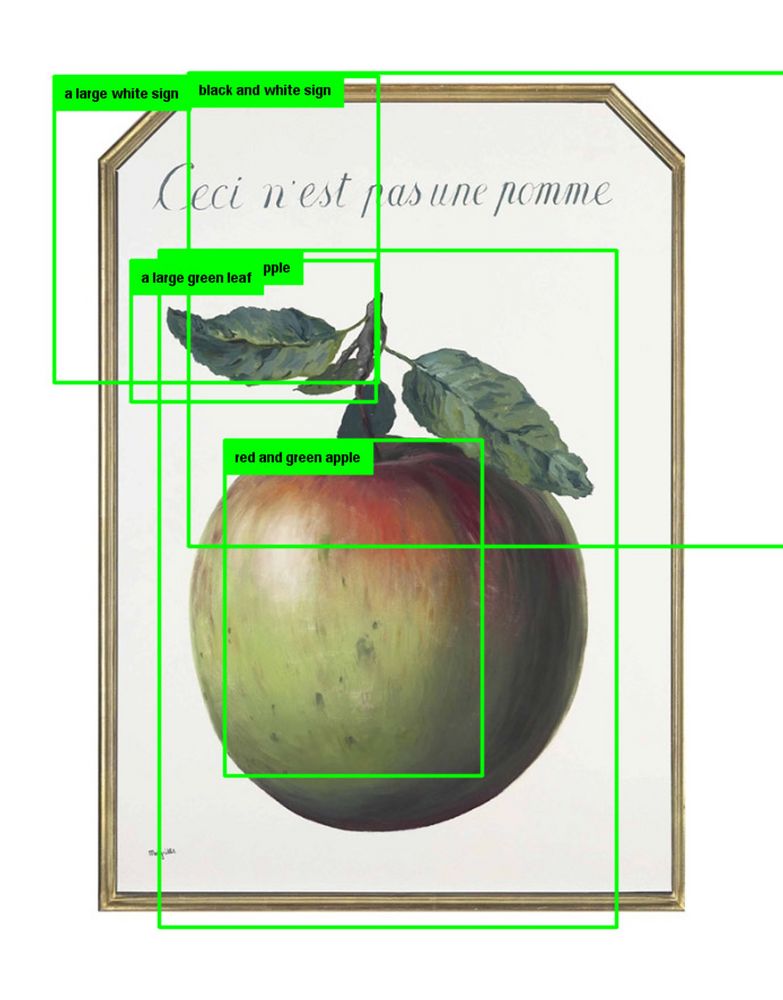

To examine this from an artistic perspective, consider one of Paglen’s most poignant examples, where he shows how artificial intelligence might interpret a picture of an apple. Given a simple image of the fruit, the computer is taught to look at and interpret the shape as “a red-green apple.” This deduction is efficient, yet static; it leaves no room for ambiguity or further elucidation.

But Paglen does not employ just any picture of a red-green apple. Nearly a century ago, the surrealist painter René Magritte challenged us to confront the “treachery of images” by writing “this is not a pipe” and “this is not an apple” above and below images of those very objects. His deceptively simple work addressed the inherent fallibility of pictures long before the days of Photoshop and deepfakes. By referencing this picture, Paglen reminds us that we continue to grapple with the complexities of interpreting images, and that artificial intelligence is not programmed to untangle such niceties. For a machine, the object is either a red-green apple or it isn’t. Doubt and debate are not given space in a device that strictly makes quantitative decisions.

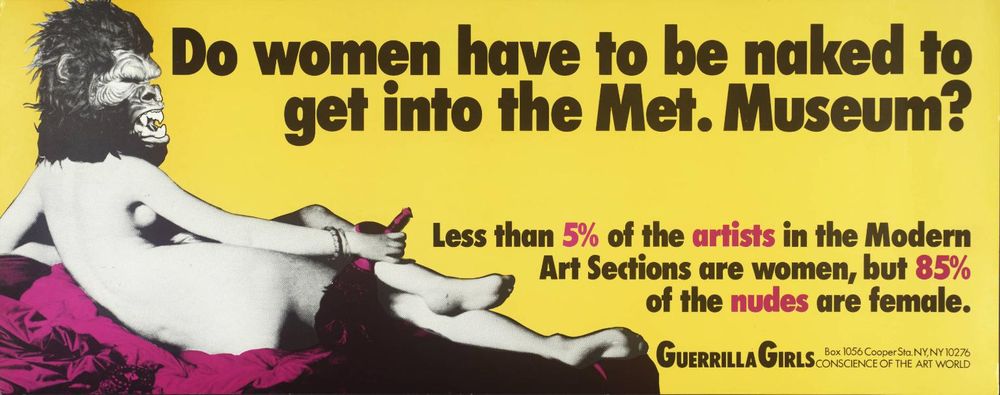

While doubt and debate are essential to the interpretation of images, they have become much harder to pursue in today’s cultural landscape. Consider another era: the 1980s, when an anonymous group of feminist artists came together to fight sexism in the arts. The group, known as the Guerrilla Girls, protested in the streets, distributed flyers and ran billboards and bus advertisements, all to push their debate into the public sphere.

Look at the image above and think about what made their guerrilla actions possible: The Metropolitan Museum of Art is an accessible public institution that was, for some time, affordable for anyone who could make the trip. At any point, members of the public could walk inside, count the works of art, and raise their concerns. The analogous situation with machines is much less democratic. We do not know how the machines are being taught to see. We cannot count how many of the images being fed into the machines were made by men, show nude women, or convey a biased presentation of race or sexual orientation. In short, nobody can walk into a neural network and create a guerrilla response to it.

These examples might strike you as interesting, or frivolous. Magritte and the Guerrilla Girls can question the power of interpretation while machines make rapid, clear-cut decisions, so where’s the problem? Maybe we simply want efficiency from our AI. But as we hand over more and more tasks to machines, we can’t let ourselves be driven solely by logics that demand the most expedient solution and the most immediate interpretation.

To make this problem more concrete, look at how AI is used in prison sentencing. Proprietary, black-boxed algorithms are fed troves of data about the prisoners: their age, race, location, rates of recidivism, and more. From this, the machine offers a “risk score” for each individual. While the algorithms aim to focus on the individual in question, independent analysis indicates significant racial bias in these results. Sadly, this should come as no surprise: after all, the system is being fed data from the past (a past dominated by systemic political, economic, and racist reasoning) to determine the future. This is just one example that Paglen cites when he explains, “I worry about this fixing of meanings.”

Indeed, for all its rhetoric of changing the world and ushering in a new era of productivity and possibility, artificial intelligence has a deeply conservative side. By analyzing the past to determine the present and the future, AI only offers us a fixed interpretation of what is possible (or what is likely) based on data from events that have already occurred. Along the way, we lose the ability to challenge our assumptions and come up with new frameworks. As Paglen reminds us, “One of the things that art has contributed to society is its ability to rearrange meaning.”

He goes on, “A human interpreting an image is highly contextual but does not strictly conform to the logic of capital or state/military power. But the logic of an AI will necessarily be driven by those forces, because those are the forces behind its creation. No one is developing these machines solely for the sake of creative or nuanced interpretation. These are money-making machines or policing machines; their interpretations are pre-determined by the constraint of having to offer a return on their capital. Yes, human interpretations might be influenced or biased in various ways, but at least we are not necessarily and solely driven by capital.”

“Keep in mind: I’m not looking into the future, I’m looking into the present,” he says. “The problem is that an awareness of what is happening right now is not evenly distributed. But I know I’m just one person, with a single point of view. I hope other people can contribute their perspectives.”

Creating a New Vision

What can we do to fight back?

There are small things, of course. To start with a quotidian example, Paglen says, “I think it’s insane that people put pictures of their kids on Facebook. You’re giving sensitive biometric information to a very powerful company whose decision-making is driven by capital. We have some idea about how images are being interpreted now, but we don’t know how they’ll be used tomorrow. These images could be retroactively analyzed in ways that we have yet to imagine. We’re making permanent records and we don’t know how they’ll be used in the future.”

Before dismissing this fear as paranoid, consider China’s social credit scores, a truly Orwellian combination of total surveillance and social engineering that is scheduled to come online by 2020. In a country that will soon have 300 million surveillance cameras, this is no dystopian nightmare but a very imminent reality. But sure, if you don’t live in China, you can keep uploading pictures of your kids onto social media hoping that a system like this never comes to fruition in your own country. In the meantime, you will be passively assisting with the construction of a panopticon that could be weaponized at any moment. The most effective counter, then, is a proactive engagement with issues surrounding our rights to privacy, anonymity, and control over our personal data. This is today’s struggle, not one to be left for tomorrow.

Still, fighting back is no simple proposition. As Paglen tells us, the struggle requires “different answers at different scales. For example, there are people who have developed make-up that scrambles facial recognition software. It’s an interesting tactic, but politically, we can’t put much faith in such efforts. They are generally only effective against certain algorithms in certain circumstances. But remember: your image is already in the system. Even if the system is scrambled today, you have to develop a tactic that will also defeat tomorrow’s facial recognition software. That’s a game that the individual will always lose.

“More importantly, these are tactics stemming from a society from which we are trying to hide,” Paglen explains. “Instead, we need to create a vision of a world we want to see, a world we want to live in. That means we need policy frameworks and more thoughtful strategies that address these questions at a societal level. We’re living at a key juncture in which artificial intelligence and the automation of sensing (and interpretation) is becoming ubiquitous. We need to discuss what kind of role we want these interpretative logics to play. Right now, Google, Facebook, and Palantir are deciding those questions, and I don’t think that’s right.”

In short, we need to decide whether we prioritize privacy or efficiency. Anonymity is a public resource, and the erasure of it points towards a system of self-imposed control. Technology, which is meant to help us and make our lives easier, threatens to impose a crushing conformity. And at the center of this growing system is not a person or an omnipotent being, but a black box—an opaque system of interpretation that will increasingly govern our reality.

Paglen’s work, and this essay, are not an effort to stop the widening flow of image-production, nor are they a Luddite cry to smash the machines. People will continue to take photographs in ever-increasing numbers; in parallel, machines will produce many more of their own images while teaching themselves how to see the world. Neither trend is likely to change. But what we can do is reassert the centrality of the individual, human interpretation of these images.

Perhaps this sounds humble, idealistic, and even a bit hopeless. Interpretation, done on a human scale, will never be as powerful, moneyed, or far-reaching as the interpretations made by machines applied to the whole world en masse. But if we don’t maintain our capacity for interpreting images individually, we give up our ability to make meaning of the world. Without necessarily realizing it, we are signing over the active part of our consciousness—that is, our interpretation—to the machines, relegating ourselves back to the passive, unthinking realm of animals.

Encouragingly, some evidence of pushback is already manifesting, and perhaps even making a difference. For example, thousands of employees at Google helped create a public debate about a secret project with the Pentagon, which sought ways to apply artificial intelligence to the task of interpreting video images that could then be used to improve targeting for drone strikes. This kind of “weaponized AI” might eventually place machine interpretation in the role of identifying targets and pulling the trigger, with no human intervention whatsoever. After intense internal arguments (and sufficient bad press), the project was called off.

The discussions at Google are a start, but as a society, we can’t let a single industry determine the frameworks of interpretation for the entire world. When we recognize that machines are being taught to see the world via the political and economic structures in which they were created, we understand that AI is not a neutral, objective arbiter, but a product of its context. Are we willing to let this context be cloaked in secrecy, or can we create the pressure to push these debates into the public forum?

However powerful the forces that build and feed the machines, the artist and the interpreter remain important figures. Now is the time to confront the importance of interpretation and think about how we, collectively, can maintain our role in making meaning in the world.

What do we dream of for our future? Do we want to live in a world of control or freedom? Should we only be driven by the logic of capital, or by the possibility of human development? Are we content to accept a regime of biased, opaque interpretation—of foreclosed possibility?

Alongside interpretation, one of the hallmarks of humanity, one of our cardinal qualities, is our capacity for hope. Paglen concludes, “We need to remember there is a possibility to make a more equitable world, with more beauty and more justice. I don’t want to discount that.” Much of the struggle for creating such a world will depend on our continuing ability to interpret images, not with fixed certainty or unthinking efficiency, but with an open, flexible perspective that could lead us to a different future.

—Alexander Strecker

Until January 6, 2019, the Smithsonian American Art Museum in Washington, D.C. is exhibiting a mid-career retrospective of Paglen’s work titled Trevor Paglen: Sites Unseen.